The Rise of AI-Native Clouds: Nebius vs. Hyperscalers

The Shift Toward AI-Native Clouds

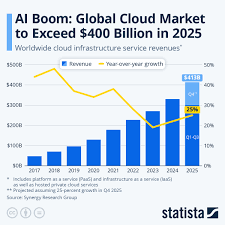

For several years, the cloud computing market was dominated by the "hyperscalers": Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP). While these giants provide vast resources, the specific requirements of Large Language Model (LLM) training and inference have created demand for a more specialized approach. This is where Nebius has carved out its niche.

An AI-native cloud is designed from the ground up to handle the massive parallel processing requirements of GPU clusters. Unlike general-purpose clouds, which are optimized for a wide variety of legacy enterprise applications, Nebius focuses on the high-performance interconnects and cooling systems necessary to keep thousands of GPUs running at peak efficiency. This architectural specialization reduces latency and increases the throughput of training jobs, making it an attractive alternative for AI labs and enterprises that find hyperscalers too rigid or prohibitively expensive for specialized workloads.

The Hardware Moat and Strategic Execution

Much of the recent stock momentum can be attributed to Nebius's ability to secure and deploy high-end compute hardware. In the current market, access to NVIDIA's latest GPUs, such as the Hopper-based H100 and the subsequent Blackwell series, is a primary determinant of a cloud provider's competitiveness.

Nebius has demonstrated an ability not only to acquire these chips but also to integrate them into a cohesive environment. The value proposition lies in the "full stack" approach: providing the hardware, the orchestration software, and the networking infrastructure. By offering a streamlined path from raw compute to a functioning model, the company reduces operational friction for its clients. This execution has translated into increased revenue and a valuation that reflects the global scarcity of high-performance compute capacity.

Market Risks and Scaling Challenges

Despite the bullish trajectory, the path forward is not without volatility. The AI infrastructure sector is characterized by intense capital expenditure. Building and maintaining data centers requires billions of dollars in upfront investment, creating a high-stakes environment where operational efficiency is paramount.

Furthermore, the company faces competition on two fronts. On one side are the hyperscalers, which have deeper pockets and existing enterprise relationships. On the other are emerging specialized providers fighting for the same slice of the GPU market. The sustainability of Nebius's growth depends on its ability to maintain its technological edge and secure a steady pipeline of high-value clients willing to migrate away from the traditional cloud giants.

Key Details of the Nebius Value Proposition

- Specialized Infrastructure: Focuses on AI-native cloud architecture optimized specifically for LLM training and inference.

- GPU Access: Strategic procurement and deployment of high-end NVIDIA hardware to meet surging demand.

- Market Performance: The stock has nearly doubled in 2026, according to the source article's headline, reflecting strong investor confidence in the AI compute vertical.

- Operational Focus: Reducing latency and maximizing throughput via specialized interconnects and data center design.

- Alternative to Hyperscalers: Positions itself as a leaner, more agile alternative to AWS, Azure, and GCP for AI-specific workloads.

As the industry moves from the experimental phase of AI to the deployment phase, the demand for efficient, scalable, and specialized compute will remain a primary driver. Nebius's recent performance suggests that the market is increasingly valuing specialized agility over general-purpose scale.

Read the full Motley Fool article at:

https://www.fool.com/investing/2026/04/18/nebius-stock-has-nearly-doubled-this-year-heres/
